Master internship: Cheops - Geo-distribute Cloud applications (Filled)

This position has been filled

General information

Start: ASAP

Supervision: Marie Delavergne, PhD student, Stack research group (IMT Atlantique, Inria, LS2N)

Team: Stack

Keywords: resource management, distributed systems, edge computing, network disconnections, modularity, geo-distribution

Background

The emergence and ubiquity of use cases such as the Internet of Things and other smart-* applications, Industry 4.0, virtual or augmented reality, and other latency-sensitive applications are driving the deployment of edge infrastructures. However, these infrastructures raise hard problems, notably high latency between nodes and intermittent connectivity.

Creating new applications tailored to these infrastructures is possible, but it entangles the geo-distribution code with the business code [1, 2]. In addition, because an edge site is frequently at risk of being isolated from the rest of the infrastructure, a federated approach presents a significant advantage: each site can keep operating locally, independently of the others, and rejoin the system when connectivity returns.

To reduce the overhead of geo-distribution concerns (i.e., federation mechanisms), Stack members proposed in 2019 a new composition model that provides on-demand collaboration between multiple instances of the same application. By exploiting the dynamic composition of Cloud application services and a domain-specific language (DSL), admins/DevOps can specify, on a per-request basis, which services from which instances should be used to execute the request. A reverse proxy then interprets this information to dynamically recompose the services across the different instances. A first proof of concept has been implemented within OpenStack, the de facto open-source solution to operate and use data centers [1, 3].
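The per-request recomposition described above can be illustrated with a small routing sketch. It is not the Stack team's implementation: the scope format (a JSON object mapping a service name to an instance name), the `ENDPOINTS` table, and the `parse_scope`/`resolve` helpers are all hypothetical, chosen only to show how a reverse proxy could turn a scope into a forwarding decision.

```python
import json

# Hypothetical endpoints of each service in each application instance (site).
ENDPOINTS = {
    "Nantes": {"compute": "http://nantes.example:8774", "image": "http://nantes.example:9292"},
    "Rennes": {"compute": "http://rennes.example:8774", "image": "http://rennes.example:9292"},
}


def parse_scope(raw: str) -> dict:
    """Parse a hypothetical per-request scope: a JSON object {service: instance}."""
    scope = json.loads(raw)
    if not isinstance(scope, dict):
        raise ValueError("scope must be a JSON object")
    return scope


def resolve(service: str, scope: dict, local: str) -> str:
    """Return the endpoint a reverse proxy would forward a call to `service` to.

    Services absent from the scope fall back to the local instance, so a site
    that is cut off from the others still serves its own requests.
    """
    instance = scope.get(service, local)
    try:
        return ENDPOINTS[instance][service]
    except KeyError:
        raise LookupError(f"no {service!r} service known for instance {instance!r}") from None


if __name__ == "__main__":
    # A request issued in Nantes that uses Rennes' image service.
    scope = parse_scope('{"image": "Rennes"}')
    print(resolve("compute", scope, local="Nantes"))
    print(resolve("image", scope, local="Nantes"))
```

Falling back to the local instance when a service is not scoped keeps each instance autonomous by default, with collaboration happening only when a request explicitly asks for it.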

One of the key concepts of this project is to leverage the modularity of service-based cloud applications to manage geo-distribution outside of the application domain, as is already done for deployment, monitoring, auto-scaling, etc. This separation of concerns is key to distinguishing the work of the application developer from that of the operator who deploys and manages the application on the infrastructure.

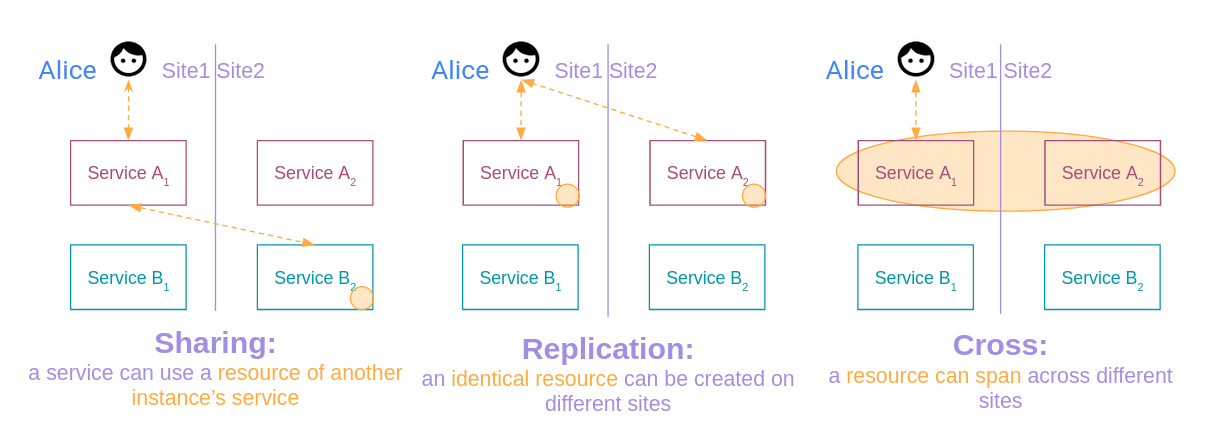

To exploit existing Cloud applications on the Edge, we rely on the principles of modularity:

- genericity: the approach must apply to any application
- externality: the approach must remain external to the concerns of the application (ideally contained in a module itself)

and on the principles of peer-to-peer (P2P) applications:

- autonomous instances: each application instance must be able to operate by itself in isolation
- collaboration: instances must be able to collaborate with each other according to three types of collaboration (see Figure 1):
  - sharing: a service can use a resource of a service from another instance
  - replication: an identical resource can be created on different sites
  - cross: a resource can span different sites

Figure 1: The different types of collaboration

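The three types of collaboration can be made concrete with a toy in-memory model. This is only an illustrative sketch, not how Cheops works: `Site`, `share`, `replicate`, and `cross` are hypothetical names, and each site's resources are modelled as plain dictionaries rather than real OpenStack or Kubernetes objects reached through the applications' APIs.

```python
from dataclasses import dataclass, field


@dataclass
class Site:
    """One autonomous application instance with its own local resource store."""
    name: str
    resources: dict = field(default_factory=dict)


def share(user: Site, owner: Site, resource_id: str) -> dict:
    """Sharing: `user` records a reference to a resource that stays on `owner`."""
    resource = owner.resources[resource_id]  # raises KeyError if `owner` lacks it
    user.resources[resource_id] = {"remote": owner.name}  # a pointer, not a copy
    return resource


def replicate(sites: list, resource_id: str, spec: dict) -> None:
    """Replication: create an identical but independent copy on every site."""
    for site in sites:
        site.resources[resource_id] = dict(spec)  # shallow copy per site


def cross(sites: list, resource_id: str, spec: dict) -> dict:
    """Cross: one logical resource spanning several sites, one part per site."""
    parts = {}
    for site in sites:
        part = {**spec, "part_of": resource_id, "site": site.name}
        site.resources[resource_id] = part
        parts[site.name] = part
    return parts


if __name__ == "__main__":
    nantes, rennes = Site("Nantes"), Site("Rennes")
    replicate([nantes, rennes], "image", {"name": "cirros"})
    nantes.resources["volume"] = {"size_gb": 1}
    print(share(rennes, nantes, "volume"))
    print(cross([nantes, rennes], "network", {"cidr": "10.0.0.0/16"}))
```

Replicated copies are deliberately independent so that each site keeps working if it is isolated, whereas a shared resource only exists on its owner and is unreachable while that owner is disconnected.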

Project/Objective

Cheops is our building block for these collaborations: it uses Scope-Lang, the DSL created for dynamic composition [3], and extends it with operators that enable these types of collaboration.

The goal of this internship is to contribute to the development of this tool, following the principles above, so that the instances can collaborate.

Depending on the intern’s interests, several angles and parts of the development can be addressed, such as the management of the module, the autonomic loops, the drivers required for each application, how to attach Cheops to a service mesh, etc.

Our two current use cases are OpenStack and Kubernetes, so any knowledge of them is a plus!

The duration of the internship is 6 months. The allowance is around 500 euros/month.

Required skills

- Knowledge of programming languages and software engineering

- English

- Curiosity and a taste for reading

- Autonomy

Skills to be acquired

- Knowledge and operation of databases offering different levels of consistency

- In-depth knowledge of how distributed applications work

- Experience conducting experiments on computing grids

Contact

The internship will take place at IMT Atlantique in Nantes, France, within the Stack team (or remotely, depending on future conditions), under the supervision of Marie Delavergne, PhD student.

Send your application or questions to marie.delavergne@inria.fr.

References

[1] Ronan-Alexandre Cherrueau, Marie Delavergne, Adrien Lebre, Javier Rojas Balderrama, Matthieu Simonin. Edge Computing Resource Management System: Two Years Later! [Research Report] RR-9336, Inria Rennes Bretagne Atlantique. 2020. https://hal.inria.fr/hal-02527366

[2] Genc Tato, Marin Bertier, Etienne Rivière, and Cédric Tedeschi. 2018. ShareLatex on the Edge: Evaluation of the Hybrid Core/Edge Deployment of a Microservices-based Application. In Proceedings of the 3rd Workshop on Middleware for Edge Clouds & Cloudlets (MECC’18). Association for Computing Machinery, New York, NY, USA, 8–15. DOI: https://doi.org/10.1145/3286685.3286687

[3] R. A. Cherrueau, J. Rojas Balderrama, and A. Lebre. OpenStackOïd: Collaborative OpenStack Clouds On-Demand. Open Infrastructure Summit, Denver, USA, May 2019. https://www.youtube.com/watch?v=kDcwToguKK0. Accessed: 02/2020.